Impact

- About a 60 percent reduction in grading time, giving teachers more time to teach and mentor.

- More consistent evaluation across students and graders, improving fairness and transparency.

- Actionable feedback for students, showing where marks were lost and how to improve.

Client overview

Arivihan is an edtech company focused on improving education outcomes in India. It set out to modernize how answers in CBSE (Central Board of Secondary Education) board exams and mock tests are evaluated by automating subjective grading and feedback.

The problem

CBSE-style grading is high-effort and hard to scale:

- Subjective answers take time to evaluate, especially at school scale.

- Inconsistency is common, with different evaluators awarding different marks for similar answers.

- Growing test volume makes manual grading a bottleneck for schools and coaching programs.

Arivihan needed a system that could grade consistently against a marking scheme, at scale, while still giving useful feedback.

Goals

- Build an AI-powered grader for CBSE board exams and mock tests.

- Ensure grading is consistent and fair, aligned to a predefined marking scheme.

- Generate detailed, student-friendly feedback that explains deductions and improvement steps.

- Integrate cleanly into Arivihan’s existing platform via APIs.

The solution

Krazimo built a scalable AI grading system that takes in the question, expected answer structure, and marking scheme, then evaluates student responses to produce both marks and feedback.

Key components:

- Marking-scheme-based grading: Evaluates subjective answers against defined rubric criteria rather than loose similarity to a model answer.

- Deduction explanations: Highlights where marks were lost and why.

- Personalized improvement guidance: Actionable suggestions aligned to the rubric.

- Reporting: Detailed student and teacher reports to track performance and identify common misconceptions.

- Integration APIs: Designed for drop-in use inside Arivihan’s edtech workflows.
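The grading and deduction-explanation components above can be illustrated with a short sketch. It is a minimal illustration under stated assumptions, not Krazimo's implementation: the case study says grading is done by fine-tuned transformer models, whereas here a plain keyword check (`point_is_covered`) stands in for the model's judgement of whether a rubric point is present. The names `RubricPoint`, `GradeResult`, and `grade_answer`, and the sample photosynthesis rubric, are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RubricPoint:
    """One line of a hypothetical marking scheme."""
    description: str
    marks: float
    keywords: list[str]  # stand-in signal only; the real grader uses a model


@dataclass
class GradeResult:
    awarded: float
    maximum: float
    deductions: list[str] = field(default_factory=list)
    suggestions: list[str] = field(default_factory=list)


def point_is_covered(answer: str, point: RubricPoint) -> bool:
    """Placeholder judgement: every keyword must appear (case-insensitive).

    In the system described in the case study, this decision would come
    from the fine-tuned grading model rather than from string matching.
    """
    text = answer.lower()
    return all(kw.lower() in text for kw in point.keywords)


def grade_answer(answer: str, rubric: list[RubricPoint]) -> GradeResult:
    """Award marks per rubric point and record an explanation for each deduction."""
    result = GradeResult(awarded=0.0, maximum=sum(p.marks for p in rubric))
    for point in rubric:
        if point_is_covered(answer, point):
            result.awarded += point.marks
        else:
            # Each lost mark is tied to a specific rubric line, so it can be explained.
            result.deductions.append(f"-{point.marks:g}: missing {point.description.lower()}")
            result.suggestions.append(f"Add {point.description.lower()}.")
    return result


# Hypothetical 4-mark science question and student answer.
rubric = [
    RubricPoint("Definition of photosynthesis", 1.0, ["light", "glucose"]),
    RubricPoint("Balanced chemical equation", 2.0, ["6co2", "c6h12o6"]),
    RubricPoint("Role of chlorophyll", 1.0, ["chlorophyll"]),
]
answer = "Plants use light energy and chlorophyll to make glucose."
res = grade_answer(answer, rubric)
print(f"{res.awarded:g}/{res.maximum:g}")  # 2/4
for line in res.deductions + res.suggestions:
    print(line)
```

Because every deduction is keyed to a named rubric line, the same pass that computes the score also produces the "where marks were lost" explanation and a matching improvement hint.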

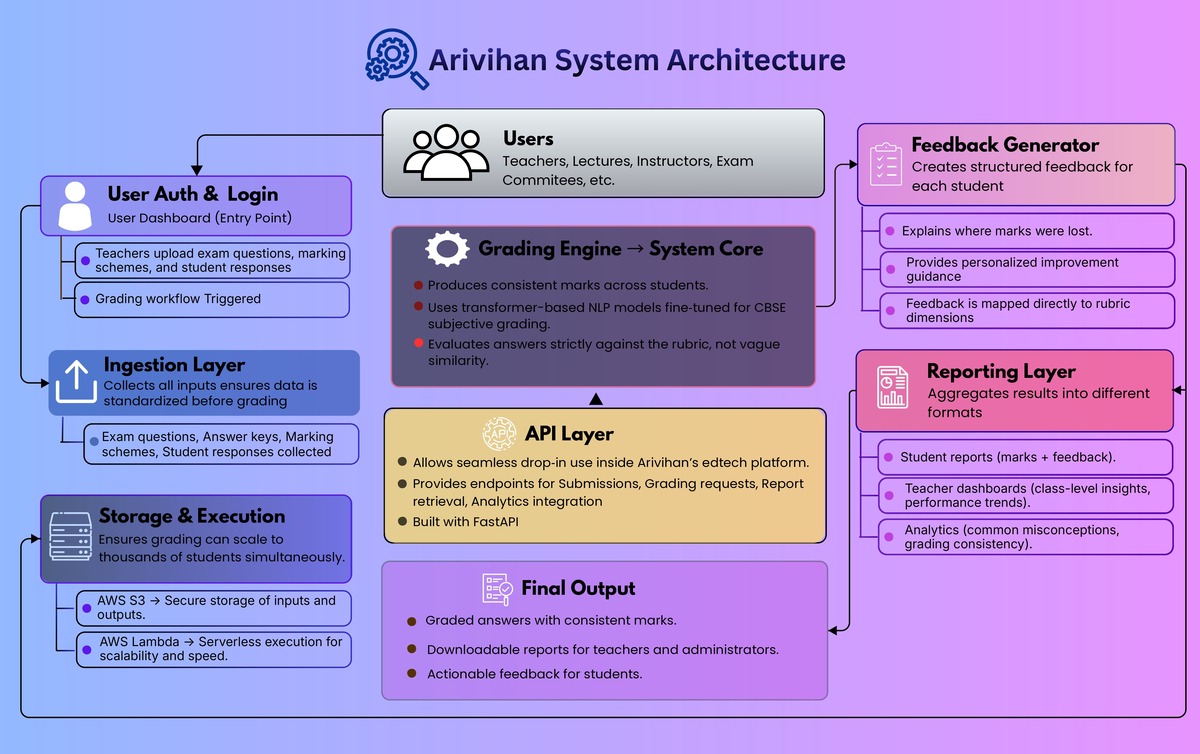

Architecture overview

- Ingestion layer: Accepts questions, answer keys, marking schemes, and student responses.

- Grading engine: Applies transformer-based NLP models fine-tuned for subjective grading, guided by the rubric and the points expected in a complete answer.

- Feedback generator: Produces structured feedback mapped to rubric dimensions (what was missing, what was incorrect, what to do next).

- Reporting layer: Aggregates results for student reports, teacher dashboards, and class-level insights.

- API layer: FastAPI endpoints for submission, grading, report retrieval, and analytics.

- Storage and execution: AWS S3 for secure storage of inputs and outputs; AWS Lambda for scalable, serverless execution.
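The reporting layer's class-level insights can be illustrated by counting which rubric points students miss most often. This is a minimal sketch under assumptions: the case study does not describe its report format, so `StudentResult`, `class_summary`, the `top_n` parameter, and the sample data are hypothetical, and the sketch assumes the grading engine emits a list of missed rubric points for each student.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class StudentResult:
    """Hypothetical per-student output of the grading engine for one question."""
    student_id: str
    awarded: float
    maximum: float  # assumed > 0
    missed_points: list[str]  # rubric lines the student did not satisfy


def class_summary(results: list[StudentResult], top_n: int = 3) -> dict:
    """Aggregate one question's results into class-level insights.

    A rubric point missed by a large share of the class is a candidate
    common misconception to surface on a teacher dashboard.
    """
    if not results:
        return {"average_percent": 0.0, "common_gaps": []}
    n = len(results)
    average_percent = 100 * sum(r.awarded / r.maximum for r in results) / n
    gap_counts = Counter(point for r in results for point in r.missed_points)
    # Share of the class that missed each of the most frequently missed points.
    common_gaps = [(point, count / n) for point, count in gap_counts.most_common(top_n)]
    return {"average_percent": round(average_percent, 1), "common_gaps": common_gaps}


results = [
    StudentResult("s1", 2.0, 4.0, ["Balanced chemical equation"]),
    StudentResult("s2", 3.0, 4.0, ["Role of chlorophyll"]),
    StudentResult("s3", 4.0, 4.0, []),
    StudentResult("s4", 1.0, 4.0, ["Balanced chemical equation", "Definition of photosynthesis"]),
]
summary = class_summary(results)
print(summary["average_percent"])  # 62.5
print(summary["common_gaps"])
```

Keying the aggregation on rubric lines rather than on raw marks is what lets a dashboard show which idea the class is missing, not just that average scores are low.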

Implementation snapshot

- Backend: Python with FastAPI

- Execution: AWS Lambda

- Storage: AWS S3

- Modeling approach: Transformer-based NLP models fine-tuned for CBSE-style subjective grading

- Delivery timeline: 4 months

Outcome

The AI grader significantly improved Arivihan’s evaluation workflow:

- Grading time dropped by about 60 percent.

- Evaluation became more consistent across students and test cycles.

- Students received clearer, more actionable feedback to improve future answers.

This project shows how AI can modernize education workflows when it is tied to a clear rubric and designed for scale. For Arivihan, the result was faster grading, fairer evaluation, and better feedback, without increasing teacher workload.