Client Overview

Dr. Jason Emer runs a high-demand aesthetic medicine practice in Beverly Hills, with patient engagement spanning web inquiries, phone calls, SMS, email campaigns, clinical visits, and a high-volume Instagram presence.

The practice needed to scale operations without losing the premium, high-touch experience that drives conversions and retention.

The Problem

The practice’s growth created predictable operational friction:

- Communications were fragmented across Salesforce, phones, email, and Instagram, with no single source of truth.

- Context was hard to recover (past calls, prior quotes, appointment history, clinical notes, consent status).

- Inbound leads could slip through the cracks, especially when response SLAs were missed.

- Call recordings existed, but weren’t actionable without fast, structured transcription and summaries.

- Instagram demand was overwhelming, with patient DMs often answered late or not at all.

- Clinical and operational systems lived separately, limiting staff’s ability to act quickly and consistently.

Goals

- Create a single operational cockpit for staff: leads, accounts, communications, scheduling, notes, consents, reporting, and analytics.

- Make every conversation searchable and useful (calls, SMS, email, and social).

- Reduce “lost lead” leakage with rules and monitoring.

- Automate the front door of patient discovery (especially Instagram) while staying on-brand and safe.

- Integrate cleanly with existing systems rather than forcing a rip-and-replace.

The Solution

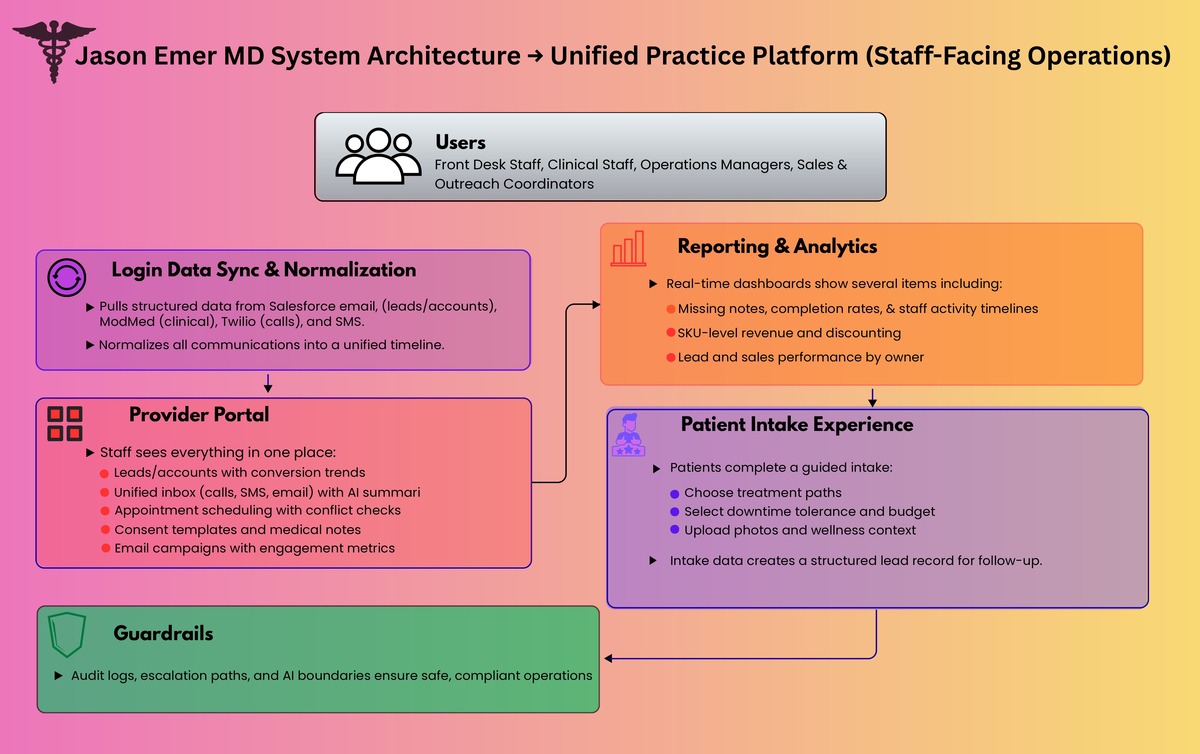

We built two connected systems that work as one operating layer:

- Unified Practice Platform (Provider Portal + Patient Intake Experience)

- AI Concierge for Instagram and Live Chat

Together, they turn inbound interest into structured intake, routed follow-ups, and measurable operational throughput.

Solution 1: Unified Practice Platform

What staff sees: one place to run the business

Leads + Accounts

- Leads and converted accounts are pulled from Salesforce on a frequent sync cadence and shown in purpose-built views.

- Team performance views show leads by owner, conversion rate trends, and top procedures.

- A “no-cracks” layer highlights uncontacted leads in time windows (example: 3 to 12 hours) so managers can intervene.
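
The uncontacted-lead rule above can be sketched in a few lines. This is a minimal illustration, not the production logic: the `Lead` fields, the function name, and the default 3 to 12 hour window (taken from the example in the text) are assumptions made for the sketch.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Lead:
    # Hypothetical shape; a real record would mirror the Salesforce lead fields.
    lead_id: str
    owner: str
    created_at: datetime
    first_contacted_at: Optional[datetime] = None


def uncontacted_in_window(
    leads: List[Lead], now: datetime, min_hours: int = 3, max_hours: int = 12
) -> List[Lead]:
    """Return leads with no contact yet whose age falls inside the window."""
    lo = timedelta(hours=min_hours)
    hi = timedelta(hours=max_hours)
    return [
        lead
        for lead in leads
        if lead.first_contacted_at is None and lo <= now - lead.created_at <= hi
    ]


now = datetime(2024, 1, 1, 12, 0)
leads = [
    Lead("a", "sam", now - timedelta(hours=1)),   # too fresh to flag
    Lead("b", "sam", now - timedelta(hours=5)),   # uncontacted, inside window
    Lead("c", "lee", now - timedelta(hours=5),
         first_contacted_at=now - timedelta(hours=4)),  # already contacted
    Lead("d", "lee", now - timedelta(hours=20)),  # outside this window
]
flagged = uncontacted_in_window(leads, now)
print([lead.lead_id for lead in flagged])
```

Running the same function with several windows (for example 3 to 12 hours, then 12 to 24) would give managers a tiered view grouped by owner.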

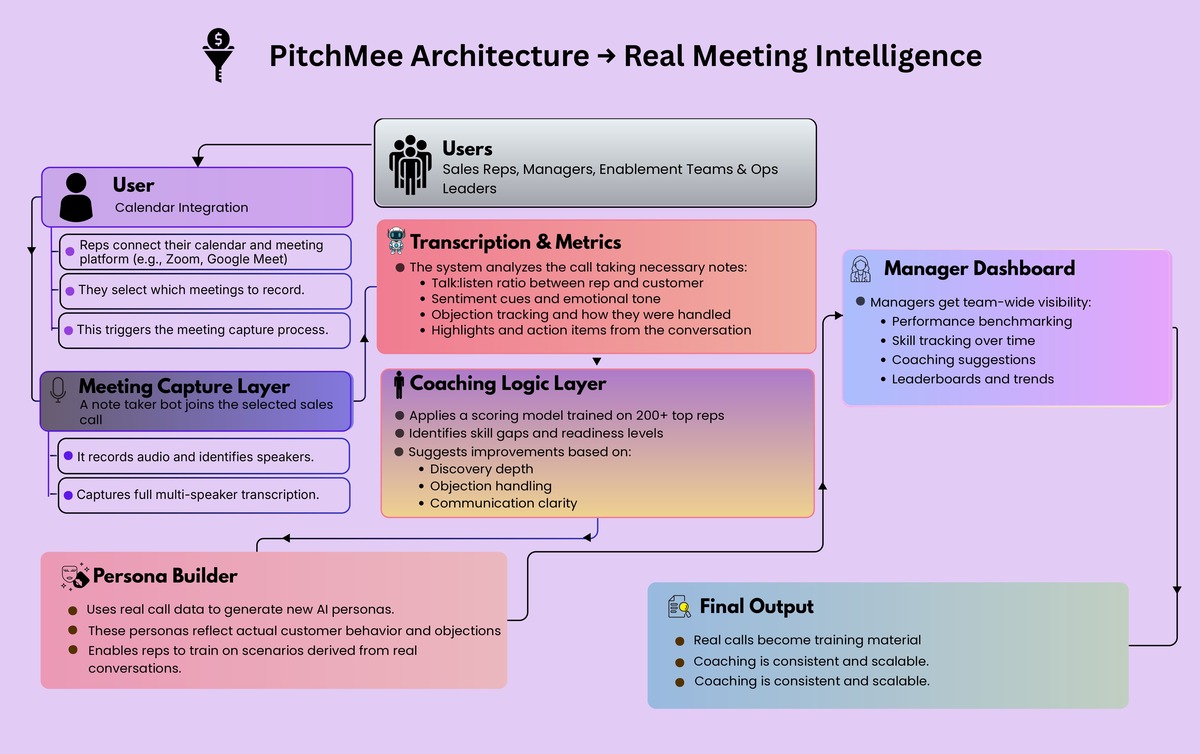

Unified Communications Inbox

- A single communications view aggregates SMS, calls, and email history.

- Call history includes transcriptions and AI summaries so staff can read what happened instead of hunting through recordings.

Email Campaigns

- Staff can run outreach directly from the portal with metrics like sends, opens, and replies.

Operations

- Appointments: schedule and manage appointments with operational constraints (example: avoid same-day cross-city conflicts).

- Consents: create reusable consent templates, assign them, and track completion.

- Medical notes: surface patient notes and workflows around completion.

- Appointment instructions: pre-built instruction templates per appointment type, sent ahead of visits.
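
As an illustration of the scheduling constraint mentioned above (avoiding same-day cross-city conflicts), a check along these lines could run before an appointment is saved. The `Appointment` fields and the function name are hypothetical, and the rule shown is one plausible reading of the constraint: a provider should not be booked in two different cities on the same day.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable


@dataclass(frozen=True)
class Appointment:
    # Hypothetical fields chosen for the sketch.
    provider: str
    day: date
    city: str


def cross_city_conflict(existing: Iterable[Appointment], proposed: Appointment) -> bool:
    """True if the provider already has a booking that day in a different city."""
    return any(
        a.provider == proposed.provider
        and a.day == proposed.day
        and a.city != proposed.city
        for a in existing
    )


booked = [Appointment("provider-1", date(2024, 3, 4), "Beverly Hills")]
same_city = Appointment("provider-1", date(2024, 3, 4), "Beverly Hills")
other_city = Appointment("provider-1", date(2024, 3, 4), "New York")
print(cross_city_conflict(booked, same_city), cross_city_conflict(booked, other_city))
```

Whether a conflict blocks the booking outright or only warns the scheduler is a product decision the sketch leaves open.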

Reporting and Risk Controls

- A reports layer was built to answer urgent operational questions quickly (example: missing notes by time period, completion rates, and breakdowns by status and owner).

Revenue and Performance Analytics

- A “single pane” analytics dashboard provides product and SKU-level performance, discounting and reason codes, activity timelines per staff member, and lead and sales overviews by owner and time window.

- The point is not generic BI; these are decision surfaces built for how this clinic operates.

What patients see: a guided intake experience

We built a guided, branded intake flow that captures structured data without feeling like a form dump:

- Choose a “path” (two experiences)

- Use an interactive body selector to identify focus areas

- Select intensity and downtime tolerance

- Provide key parameters (budget range, sensitivity, skin type)

- Provide optional wellness context (if applicable)

- Upload photos (front/back) for additional context

- Submit details and create a lead record for follow-up

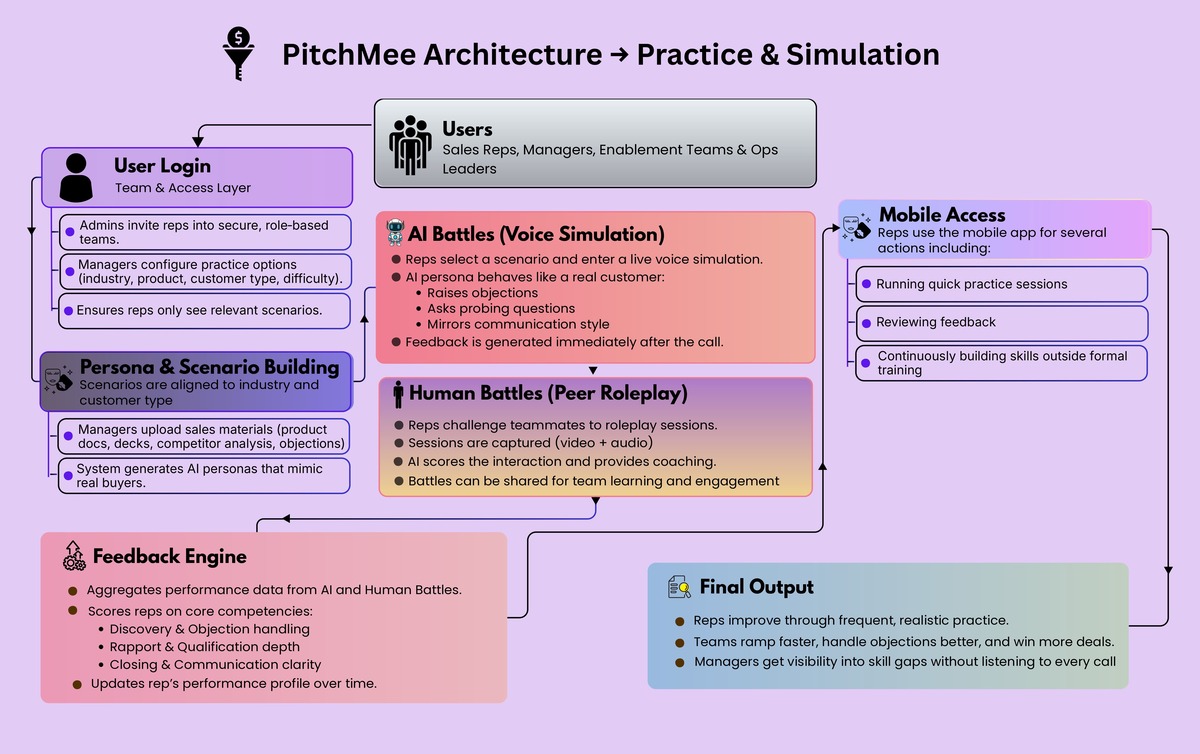

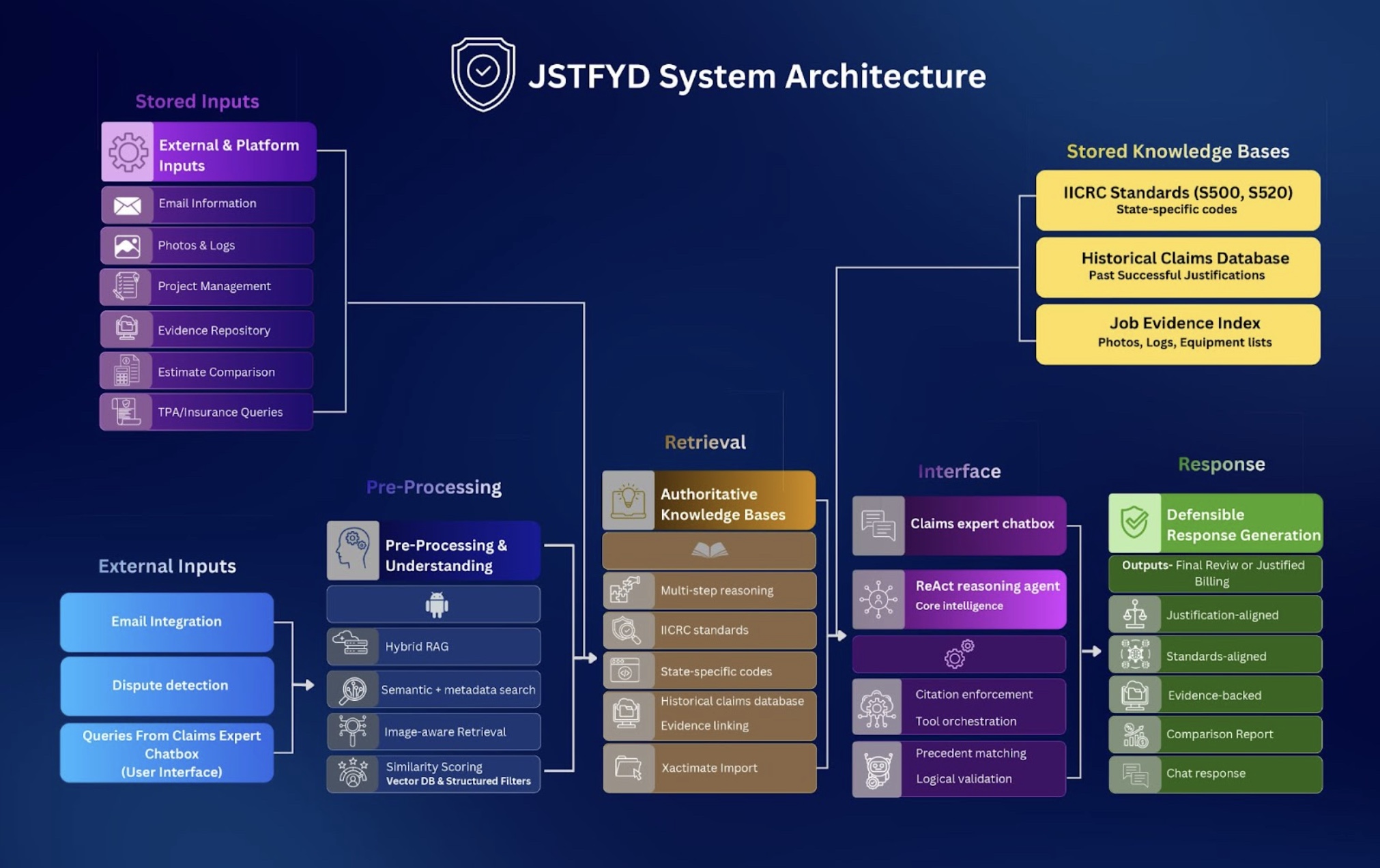

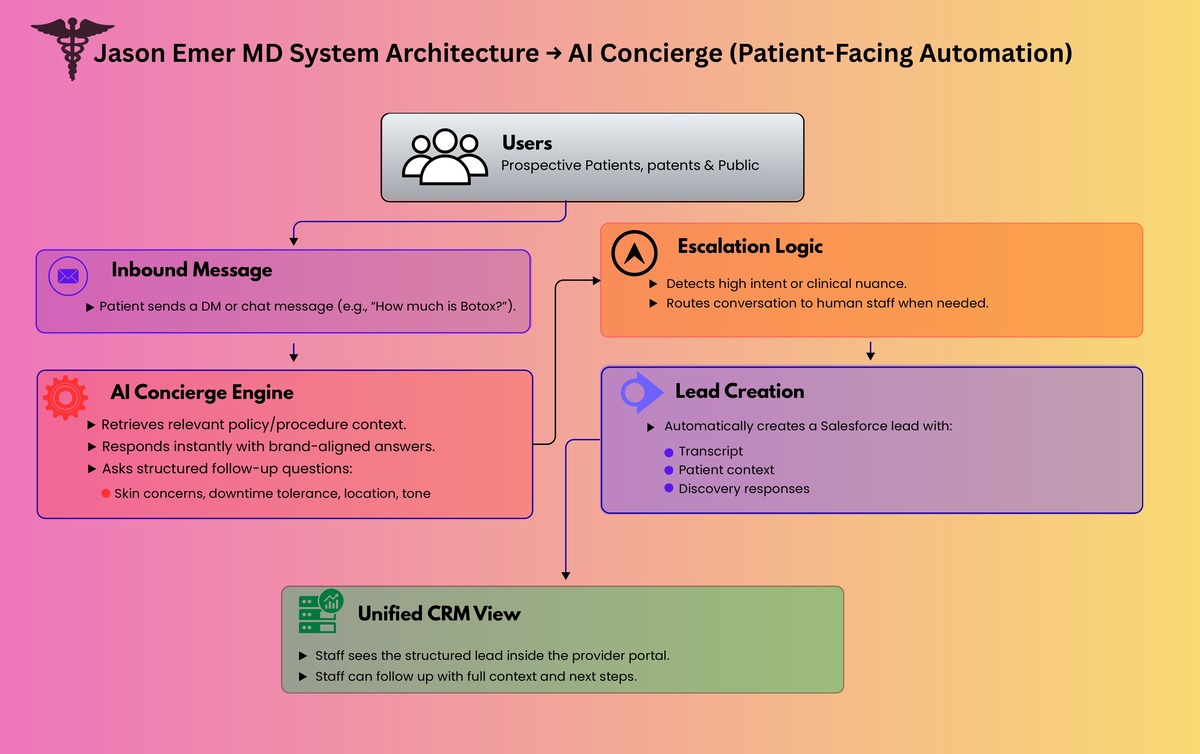

Solution 2: AI Concierge for Instagram and Live Chat

Instagram is a top-of-funnel channel for modern aesthetic practices, but it is operationally brutal at scale. We built an AI concierge that can:

- answer common questions instantly

- guide patients through a structured discovery conversation

- ask the right follow-up questions (skin concerns, downtime tolerance, skin tone, location, etc.)

- stay aligned to the brand voice (premium, patient-first)

- escalate to humans when intent is high or clinical nuance is needed

- create Salesforce leads automatically when the patient asks to be contacted

This turns Instagram from “busy inbox” into a qualified lead pipeline with context.

Architecture Overview

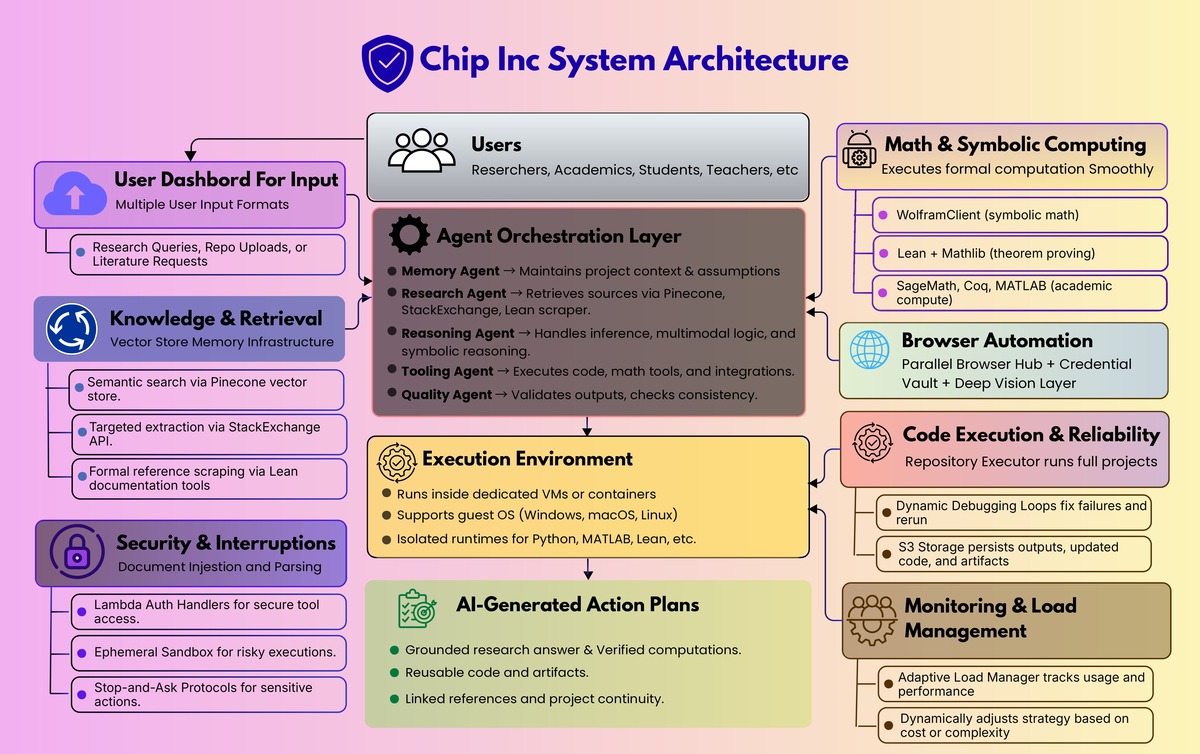

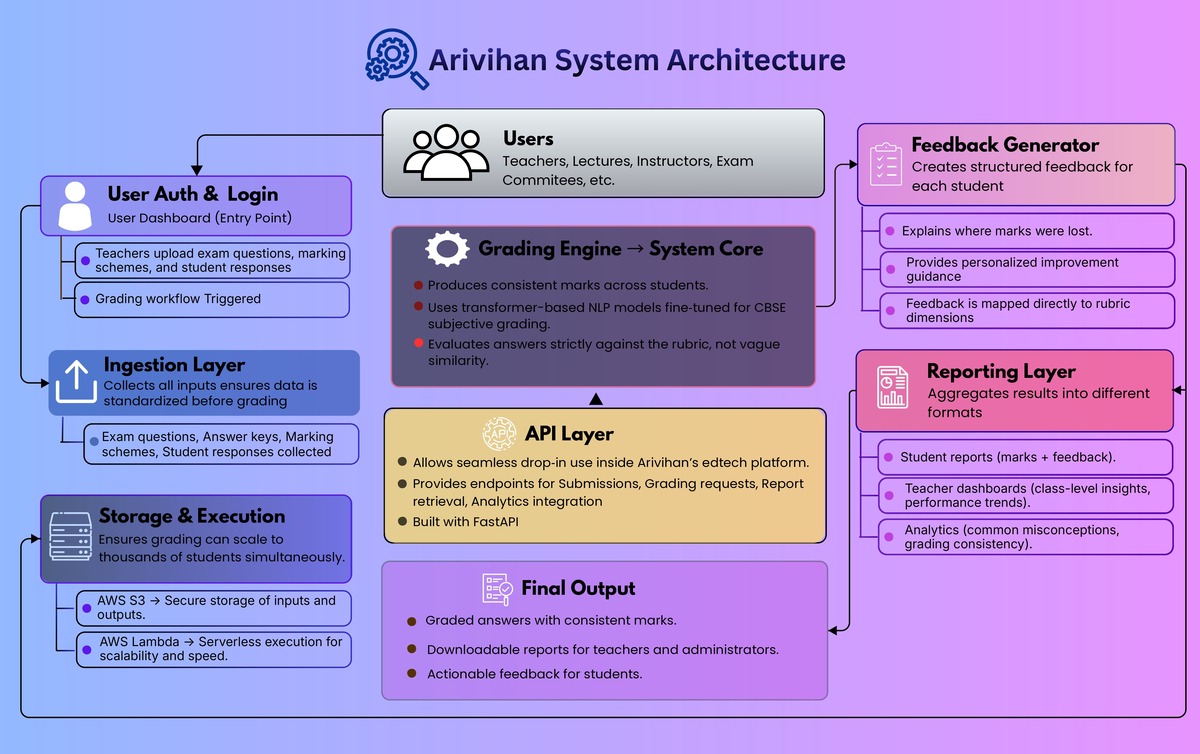

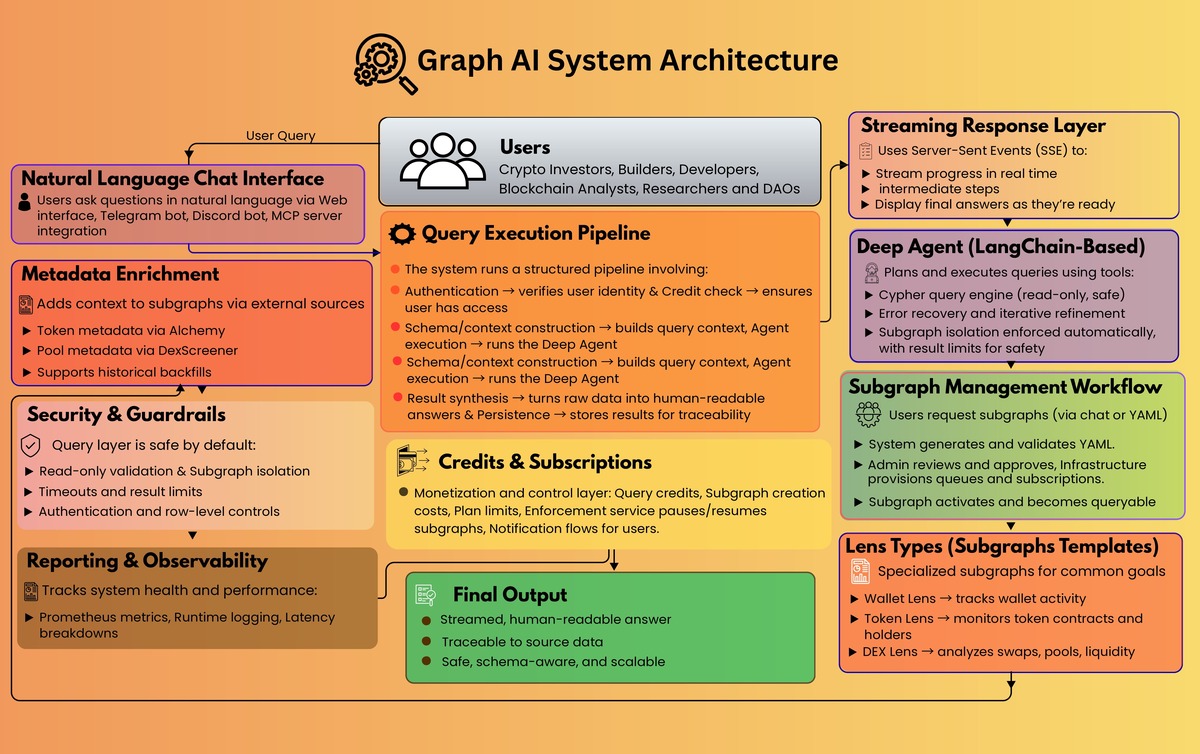

High-level design

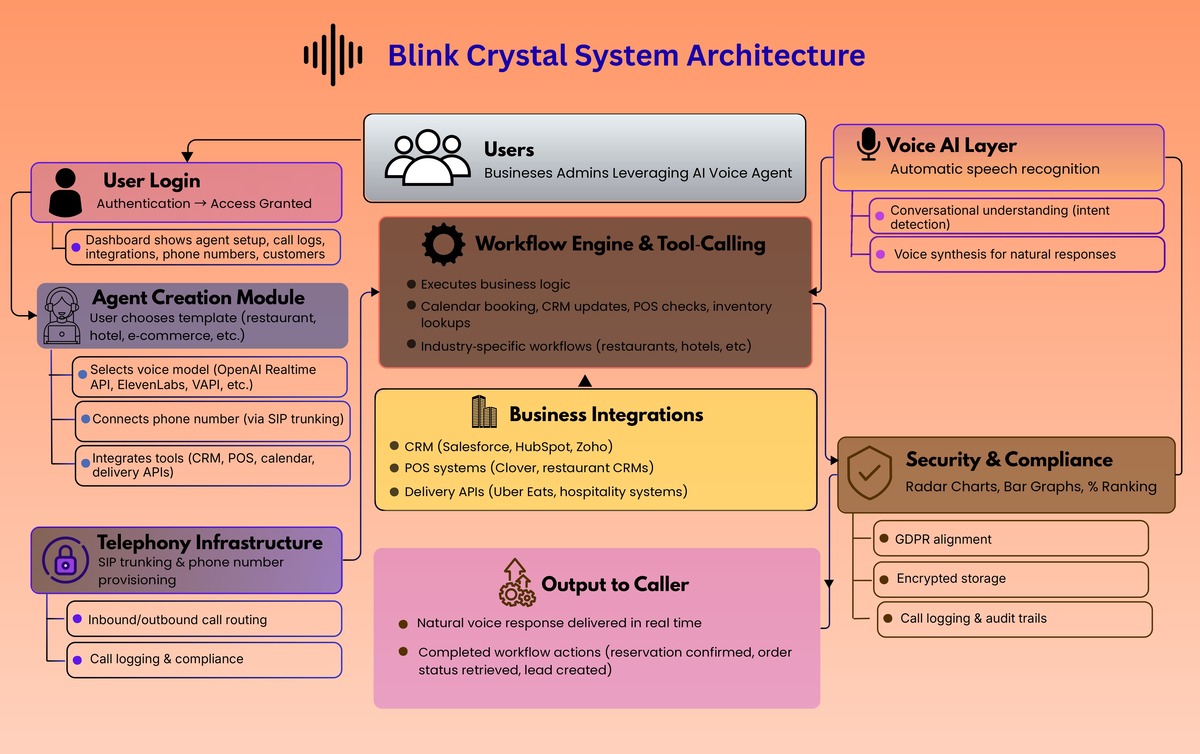

1) Data + systems of record

- Salesforce as the operational backbone for leads, accounts, quotes, ownership, and activity

- ModMed (EHR) as the clinical system of record

- Twilio (or equivalent) for telephony and call recording

- ManyChat (or equivalent) as the Instagram gateway

2) Unified ingestion and normalization

- Scheduled sync pulls new Salesforce and operational records into the portal

- Communications events (calls, SMS, email) are normalized into a consistent timeline model

- Clinical context is joined where appropriate to give staff a richer patient view
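
To make the "consistent timeline model" concrete, here is a minimal sketch of normalizing two channels into one event type. The raw payload shapes (`started_epoch`, `ai_summary`, `sent_at`, and so on) are invented for the example and do not reflect any vendor's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List


@dataclass(frozen=True)
class TimelineEvent:
    patient_id: str
    channel: str        # "call", "sms", "email", ...
    occurred_at: datetime
    direction: str      # "inbound" or "outbound"
    summary: str


def from_call(raw: dict) -> TimelineEvent:
    # Hypothetical telephony payload: epoch seconds plus an AI-written summary.
    return TimelineEvent(
        patient_id=raw["patient_id"],
        channel="call",
        occurred_at=datetime.fromtimestamp(raw["started_epoch"], tz=timezone.utc),
        direction=raw["direction"],
        summary=raw["ai_summary"],
    )


def from_sms(raw: dict) -> TimelineEvent:
    # Hypothetical SMS payload: ISO 8601 timestamp with an explicit UTC offset.
    return TimelineEvent(
        patient_id=raw["contact"],
        channel="sms",
        occurred_at=datetime.fromisoformat(raw["sent_at"]),
        direction="inbound" if raw["inbound"] else "outbound",
        summary=raw["body"][:80],
    )


def build_timeline(events: Iterable[TimelineEvent]) -> List[TimelineEvent]:
    """Order events chronologically, regardless of source channel."""
    return sorted(events, key=lambda e: e.occurred_at)


timeline = build_timeline([
    from_call({"patient_id": "p1", "started_epoch": 1704103200,
               "direction": "inbound", "ai_summary": "Asked about pricing."}),
    from_sms({"contact": "p1", "sent_at": "2024-01-01T09:30:00+00:00",
              "inbound": True, "body": "Hi, do you have availability next week?"}),
])
print([event.channel for event in timeline])
```

Keeping one adapter per source means a new channel only needs a new `from_*` function; the timeline view itself does not change.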

3) AI processing pipelines

- Call pipeline: recording → transcription → speaker separation → summary → indexed to patient/activity

- Chat pipeline: message → retrieve policy/procedure context → response generation → safe delivery → logged transcript → optional CRM lead creation
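
The chat pipeline stages can be sketched as a single handler. Everything here is a stand-in: retrieval is a naive keyword lookup, `generate` is an injected callable where a model call would go, and the banned-term check and `CONTACT_PHRASES` list are hypothetical simplifications of the safety and lead-creation steps.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical phrases that signal the patient wants a follow-up from staff.
CONTACT_PHRASES = ("call me", "contact me", "reach out")
HANDOFF_REPLY = "Let me connect you with a member of our team."


@dataclass
class ChatResult:
    reply: str
    escalated: bool
    lead_requested: bool


def retrieve_context(message: str, knowledge: Dict[str, str]) -> List[str]:
    """Naive keyword lookup standing in for real policy/procedure retrieval."""
    text = message.lower()
    return [answer for keyword, answer in knowledge.items() if keyword in text]


def is_safe(reply: str, banned_terms: Sequence[str]) -> bool:
    """Reject replies containing claims the assistant must not make."""
    lower = reply.lower()
    return not any(term in lower for term in banned_terms)


def handle_message(
    message: str,
    knowledge: Dict[str, str],
    generate: Callable[[str, List[str]], str],
    banned_terms: Sequence[str],
    transcript: List[Tuple[str, str]],
) -> ChatResult:
    context = retrieve_context(message, knowledge)
    reply = generate(message, context)
    # Escalate when there is nothing to ground the answer or the reply fails the check.
    escalated = not context or not is_safe(reply, banned_terms)
    if escalated:
        reply = HANDOFF_REPLY
    lead_requested = any(phrase in message.lower() for phrase in CONTACT_PHRASES)
    transcript.append((message, reply))  # every exchange is logged
    return ChatResult(reply, escalated, lead_requested)


def echo_generate(message: str, context: List[str]) -> str:
    # Placeholder generator: returns the retrieved context verbatim.
    return " ".join(context)


knowledge = {"downtime": "Downtime varies by treatment; our team can walk you through options."}
transcript: List[Tuple[str, str]] = []
answered = handle_message("What is the downtime? Please call me.",
                          knowledge, echo_generate, ("guarantee",), transcript)
handed_off = handle_message("Can you promise results?",
                            knowledge, echo_generate, ("guarantee",), transcript)
print(answered.lead_requested, handed_off.escalated, len(transcript))
```

In this sketch a `lead_requested` result would be the trigger for the optional CRM lead creation step; the CRM call itself is deliberately left out.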

4) Presentation layer

- Provider portal for operations and analytics

- Patient intake experience that structures demand before it hits the team

5) Guardrails

- Audit logs for every interaction

- Clear boundaries on what the AI can and cannot claim

- Escalation paths to staff when needed

Results and Impact

- Minutes, not hours, to understand a call: transcriptions and summaries make phone conversations instantly actionable.

- Sub-minute responses on high-volume social channels, converting attention into structured patient journeys instead of stalled DMs.

- Reduced lead leakage via “uncontacted lead” rules and manager visibility by owner/team.

- Operational clarity: appointments, consents, notes, instructions, and reporting centralized in one system.

- Better decision-making: revenue, SKU performance, discounting, staff activity, and lead/sales trends visible in one place.

Why It Worked

This wasn’t “AI bolted onto a CRM.” It was an operating system approach:

- unify the clinic’s reality (calls, texts, email, IG, scheduling, notes)

- turn unstructured conversations into structured next actions

- keep Salesforce/ModMed as systems of record while making them actually usable day-to-day

- build automation where it removes toil, not where it introduces risk

Krazimo helped Dr. Jason Emer’s practice scale patient engagement without sacrificing the premium experience. By combining a unified operations platform with an AI concierge that can handle high-volume inbound demand, the practice gets faster response times, cleaner follow-ups, and clearer operational control, all without ripping out existing systems.